Frosty AI is an LLM-agnostic platform founded in 2026, designed to simplify how developers and enterprises build, deploy, and manage large language model systems across multiple providers. It removes the complexity of working with isolated APIs and introduces a unified orchestration layer for modern AI applications.

- What is Frosty AI and Why It Matters

- Rising Market Pressure and AI-Driven Platform Shift

- Key Features of Frosty AI

- Hybrid Routing Intelligence

- Real Time Analytics for AI Optimization

- Cost Optimization in Enterprise AI Systems

- Benefits of Frosty AI for Developers

- Comparative Overview of Multi-Model Orchestration

- FAQs

- Conclusion

Frosty AI enables organizations to connect with leading model providers such as OpenAI, Anthropic, and Mistral through a single interface. As businesses face rising costs and reduced flexibility in the AI ecosystem, this platform offers a strategic alternative that supports scalability, observability, and intelligent routing.

What is Frosty AI and Why It Matters

Frosty AI is a unified orchestration platform that allows organizations to manage multiple large language models without vendor dependency. It acts as a middleware layer that simplifies integration and ensures seamless switching between AI providers based on performance and cost efficiency.

In the current AI landscape, many enterprises are experiencing a slowdown in innovation due to over-reliance on single provider ecosystems and increasing operational costs. Frosty AI addresses this challenge by enabling flexible model usage, improved governance, and consistent performance tracking across all deployments.

Rising Market Pressure and AI-Driven Platform Shift

The growing adoption of generative AI has created a saturated ecosystem where differentiation is becoming harder for standalone tools. Many platforms are experiencing reduced traction due to rapid advancements in foundational models offered directly by major providers.

Frosty AI responds to this shift by positioning itself as an infrastructure layer rather than a competing model provider. It helps enterprises avoid operational fragmentation while ensuring long-term adaptability in an AI-dominated market.

Key Features of Frosty AI

- Unified API access to multiple LLM providers

- Intelligent request routing based on performance metrics

- Centralized usage monitoring and cost tracking

- Scalable cloud-native infrastructure

- Secure multi-tenant environment

This structure allows enterprises to reduce integration complexity while improving system reliability and flexibility.
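To make the idea of unified API access concrete, here is a minimal sketch of a single client that dispatches to interchangeable provider adapters. It is not Frosty AI's actual SDK: `ChatProvider`, `EchoProvider`, and `UnifiedClient` are hypothetical names, and the stand-in provider only echoes its input instead of calling a real model.

```python
from typing import Dict, Protocol


class ChatProvider(Protocol):
    """Hypothetical interface every provider adapter would implement."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in adapter; a real one would call a provider's API here."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class UnifiedClient:
    """One entry point that forwards requests to a registered provider."""

    def __init__(self) -> None:
        self._providers: Dict[str, ChatProvider] = {}

    def register(self, name: str, provider: ChatProvider) -> None:
        self._providers[name] = provider

    def complete(self, provider_name: str, prompt: str) -> str:
        try:
            provider = self._providers[provider_name]
        except KeyError:
            raise ValueError(f"unknown provider: {provider_name!r}") from None
        return provider.complete(prompt)
```

Because application code only talks to `UnifiedClient`, switching providers becomes a change to one string argument rather than a rewrite of the integration.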

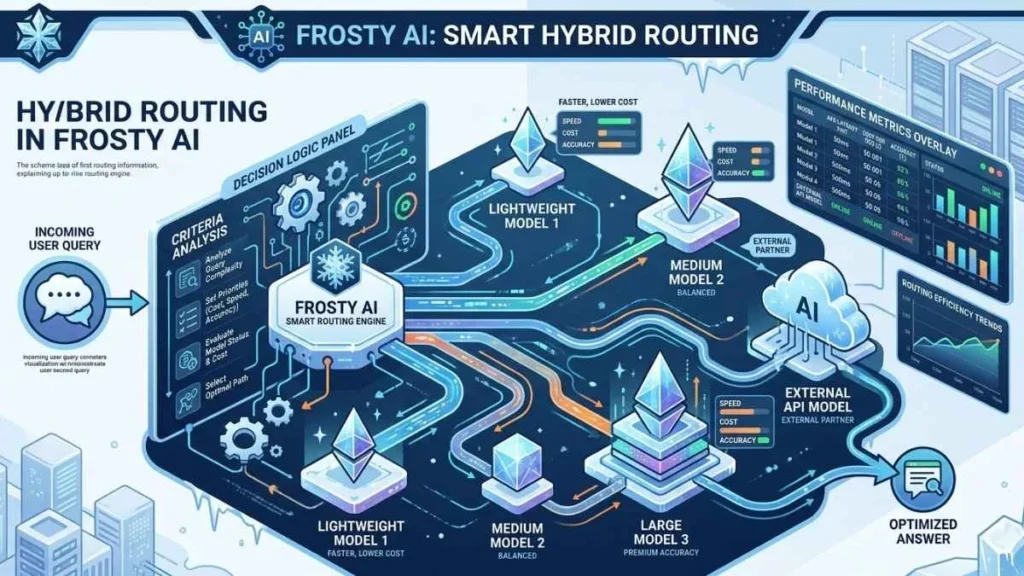

Hybrid Routing Intelligence

Hybrid routing is one of the most important components of Frosty AI. It dynamically selects the most suitable model for each request based on cost, latency, and output quality requirements.

It evaluates multiple parameters in real time to ensure optimal decision-making. This reduces unnecessary API expenses while improving response accuracy for critical workloads. It also supports fallback mechanisms in case a primary model becomes unavailable.
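The routing and fallback behavior described above can be sketched as follows. This is an illustrative approximation under stated assumptions, not Frosty AI's routing algorithm: `ModelProfile`, `rank_models`, and `route` are hypothetical names, the cost, latency, and quality figures are supplied by the caller, and the selection policy (cheapest model that meets a quality floor and a latency ceiling) is just one plausible choice.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical per-model statistics supplied by the caller."""
    name: str
    cost_per_1k_tokens: float  # USD, illustrative
    p50_latency_ms: float      # observed median latency
    quality_score: float       # 0..1, e.g. from an offline evaluation


def rank_models(models: List[ModelProfile], min_quality: float,
                max_latency_ms: float) -> List[ModelProfile]:
    """Return models meeting both constraints, cheapest first."""
    eligible = [
        m for m in models
        if m.quality_score >= min_quality and m.p50_latency_ms <= max_latency_ms
    ]
    return sorted(eligible, key=lambda m: m.cost_per_1k_tokens)


def route(prompt: str, models: List[ModelProfile],
          call_model: Callable[[str, str], str],
          min_quality: float = 0.0,
          max_latency_ms: float = float("inf")) -> Tuple[str, str]:
    """Try eligible models in cost order, falling back when a call fails."""
    errors = []
    for model in rank_models(models, min_quality, max_latency_ms):
        try:
            return model.name, call_model(model.name, prompt)
        except Exception as exc:  # a real router would catch narrower errors
            errors.append(f"{model.name}: {exc}")
    raise RuntimeError("no eligible model succeeded: " + "; ".join(errors))
```

Here `call_model` is whatever function actually sends the request. If the cheapest eligible model raises an error, the loop moves on to the next candidate, which is the fallback mechanism in its simplest form.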

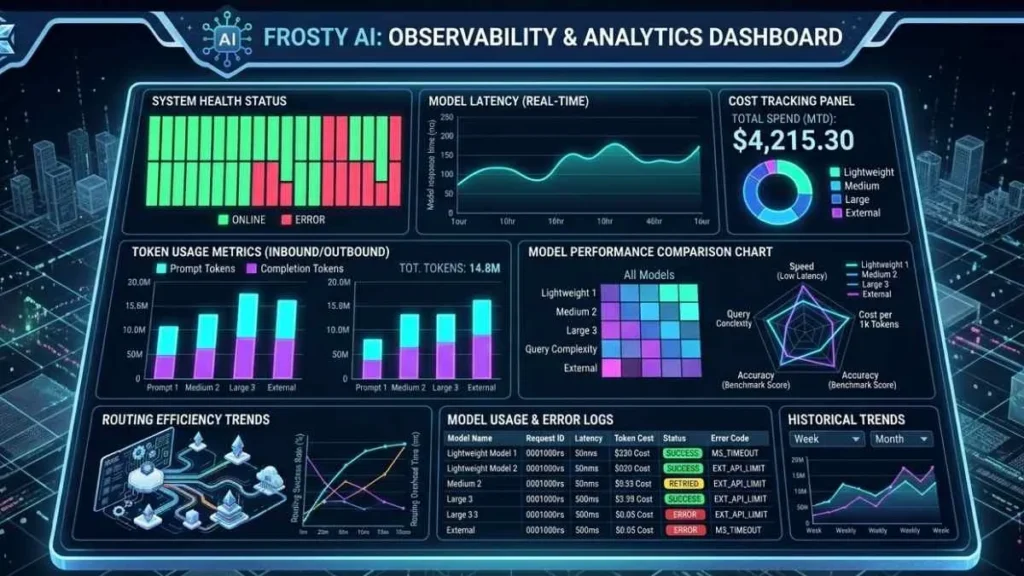

Real Time Analytics for AI Optimization

Frosty AI's real-time analytics help organizations identify inefficiencies and optimize workload distribution. By analyzing historical trends, businesses can refine their AI strategies and allocate resources more effectively, which improves financial control and operational transparency.

Token Usage Monitoring

Frosty AI tracks token consumption across different models and applications in real time. This helps organizations understand where resources are being consumed and identify optimization opportunities for reducing unnecessary usage.
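As a rough illustration of what per-model, per-application token tracking involves, the sketch below accumulates counts in memory. `TokenUsageTracker` and its methods are hypothetical names, and the example assumes the caller already knows the prompt and completion token counts for each request.

```python
from collections import defaultdict


class TokenUsageTracker:
    """Accumulates token counts per (application, model) pair."""

    def __init__(self):
        self._totals = defaultdict(lambda: {"prompt": 0, "completion": 0})

    def record(self, application, model, prompt_tokens, completion_tokens):
        entry = self._totals[(application, model)]
        entry["prompt"] += prompt_tokens
        entry["completion"] += completion_tokens

    def total_for(self, application=None, model=None):
        """Sum all tokens, optionally filtered by application and/or model."""
        return sum(
            counts["prompt"] + counts["completion"]
            for (app, mdl), counts in self._totals.items()
            if (application is None or app == application)
            and (model is None or mdl == model)
        )

    def top_consumers(self, n=3):
        """Return the n (application, model) pairs that used the most tokens."""
        ranked = sorted(
            self._totals.items(),
            key=lambda item: item[1]["prompt"] + item[1]["completion"],
            reverse=True,
        )
        return [key for key, _ in ranked[:n]]
```

Ranking the top consumers is one simple way to surface the optimization opportunities mentioned above.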

Response Time and Latency Analysis

The platform continuously measures latency across all integrated LLM providers. This enables businesses to compare performance and route requests to faster models when required for time-sensitive applications.
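One way to picture latency comparison across providers is a small monitor that times each call and reports the provider with the lowest median. This is a sketch only: `LatencyMonitor` is a hypothetical class, and using the median as the comparison statistic is an assumption rather than something the platform documents.

```python
import statistics
import time
from collections import defaultdict


class LatencyMonitor:
    """Collects per-provider latency samples, in seconds."""

    def __init__(self):
        self._samples = defaultdict(list)

    def record(self, provider, seconds):
        self._samples[provider].append(seconds)

    def timed_call(self, provider, fn, *args, **kwargs):
        """Run fn and record how long it took, even if it raises."""
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            self.record(provider, time.perf_counter() - start)

    def median(self, provider):
        return statistics.median(self._samples[provider])

    def fastest(self):
        """Provider with the lowest median latency among those with samples."""
        candidates = [p for p, samples in self._samples.items() if samples]
        if not candidates:
            raise ValueError("no latency samples recorded")
        return min(candidates, key=self.median)
```

A router handling time-sensitive requests could consult `fastest()` before choosing where to send the next call.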

Cost Distribution Insights

Frosty AI breaks down AI spending across departments, applications, and workloads. This allows decision makers to clearly see where budgets are being allocated and adjust usage patterns to reduce costs.
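A cost breakdown of this kind amounts to grouping usage records by a chosen dimension and pricing the tokens. The sketch below assumes a simple record shape and a made-up price table; `PRICE_PER_1K` and `cost_breakdown` are hypothetical, and the prices are placeholders rather than real provider rates.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical prices in USD per 1,000 tokens; not real provider rates.
PRICE_PER_1K = {"model-a": Decimal("0.50"), "model-b": Decimal("2.00")}


def cost_breakdown(usage_records, dimension):
    """Total cost grouped by one key of each record.

    Each record is a dict with the keys "department", "application",
    "model", and "tokens"; dimension names the key to group by.
    """
    totals = defaultdict(Decimal)
    for record in usage_records:
        cost = PRICE_PER_1K[record["model"]] * record["tokens"] / 1000
        totals[record[dimension]] += cost
    return dict(totals)
```

Calling `cost_breakdown(records, "department")` and `cost_breakdown(records, "application")` on the same records gives two views of the same spend. `Decimal` is used so that currency totals do not pick up floating-point rounding noise.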

Historical Performance Trends

The system stores long-term performance data to help organizations analyze how model behavior changes over time. These insights support better forecasting and strategic planning for AI adoption.

Cost Optimization in Enterprise AI Systems

One of the biggest challenges in AI adoption is controlling operational expenses. Frosty AI introduces intelligent cost optimization mechanisms that automatically route requests to more efficient models when appropriate.

It provides detailed cost breakdowns by application, department, and usage pattern. Organizations can set budget thresholds and receive alerts when spending exceeds predefined limits. This ensures financial predictability while maintaining performance standards.
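Budget thresholds and alerts can be modeled with very little code. The following sketch is an assumption about how such a guard might behave, not a description of Frosty AI's implementation: `BudgetGuard`, the 80% warning ratio, and the "economy" and "standard" tier labels are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BudgetGuard:
    """Tracks spend against a limit and records threshold alerts."""
    limit_usd: float
    warn_ratio: float = 0.8  # illustrative: warn at 80% of the limit
    spent_usd: float = 0.0
    alerts: List[str] = field(default_factory=list)

    def add_spend(self, amount_usd: float) -> None:
        before = self.spent_usd
        self.spent_usd += amount_usd
        warn_at = self.limit_usd * self.warn_ratio
        # Each alert fires once, at the moment its threshold is crossed.
        if before < warn_at <= self.spent_usd:
            self.alerts.append(
                f"warning: spend {self.spent_usd:.2f} reached "
                f"{self.warn_ratio:.0%} of budget"
            )
        if before <= self.limit_usd < self.spent_usd:
            self.alerts.append(
                f"exceeded: spend {self.spent_usd:.2f} is over "
                f"limit {self.limit_usd:.2f}"
            )

    def preferred_tier(self) -> str:
        """Suggest a cheaper model tier once the warning level is reached."""
        warn_at = self.limit_usd * self.warn_ratio
        return "economy" if self.spent_usd >= warn_at else "standard"
```

A router could call `preferred_tier()` before each request to shift traffic toward cheaper models as the budget tightens, which is one way to combine alerts with automatic cost-aware routing.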

Benefits of Frosty AI for Developers

- Simplified API integration across multiple LLMs

- Faster experimentation with different models

- Built-in testing and staging environments

- Consistent deployment workflows

- Reduced maintenance overhead

These capabilities accelerate development cycles and improve productivity for engineering teams working with generative AI systems.

Comparative Overview of Multi-Model Orchestration

| Feature Area | Traditional LLM Setup | Frosty AI Approach |
| --- | --- | --- |
| Model Access | Single provider dependency | Multi-provider integration |
| Cost Control | Manual optimization | Automated routing system |
| Observability | Limited insights | Full request-level tracing |
| Scalability | Provider constrained | Infrastructure independent |
| Flexibility | Low adaptability | High model flexibility |

FAQs

What is Frosty AI used for?

Frosty AI is used to manage, deploy, and optimize multiple large language models from different providers in one unified platform.

Does Frosty AI support multiple AI providers?

Yes, it integrates with providers like OpenAI, Anthropic, and Mistral for flexible multi-model usage.

How does Frosty AI reduce AI costs?

It reduces costs through intelligent routing, selecting the most efficient model based on performance and pricing.

Conclusion

Frosty AI represents a major shift in how organizations interact with large language models by introducing a unified orchestration layer that reduces complexity and improves efficiency. It enables enterprises to move beyond fragmented AI systems and adopt a more structured and scalable approach.

Frosty AI is positioned as a foundational infrastructure layer that supports long-term AI transformation across industries. It helps businesses optimize costs, enhance performance, and retain control over their AI ecosystems in an increasingly competitive environment.